By Anja Kaspersen, Kobi Leins & Wendell Wallach

When George Orwell wrote about totalitarianism, he had not lived under a totalitarian regime; he could only imagine what one might look like. He identified two primary traits of totalitarian societies: one is lying (or misinformation), and the other is what he called “schizophrenia.” Orwell wrote:

“The organised lying practiced by totalitarian states is not, as it is sometimes claimed, a temporary expedient of the same nature as military deception. It is something integral to totalitarianism, something that would still continue even if concentration camps and secret police forces had ceased to be necessary.”

Orwell framed lying and organized lying as fundamental aspects of totalitarianism. Generative AI models, without checks and guardrails, provide an ideal tool to facilitate both.

Similarly, in 1963, Hannah Arendt coined the phrase “the banality of evil” in response to the trial of Adolf Eichmann for Nazi war crimes. She was struck by how Eichmann himself did not seem like an evil person but had nonetheless done evil things by following instructions unquestioningly. Boringly normal people, she argued, could commit evil acts through mere subservience—doing what they were told without challenging authority.

We propose that current iterations of AI are increasingly able to encourage subservience to a non-human and inhumane master, one that tells potentially systematic untruths with emphatic confidence. This is a possible precursor to totalitarian regimes, and certainly a threat to any notion of democracy. The “banality of evil” is enabled by unquestioning minds susceptible to the “magical thinking” surrounding these technologies, including the collection and use of data in harmful ways not understood by those it affects, and algorithms designed to modify and manipulate behavior.

We acknowledge that raising the question of “evil” in the context of artificial intelligence is a dramatic step. However, the prevailing utilitarian calculation, which suggests that the benefits of AI will outweigh its undesired societal, political, economic, and spiritual consequences, diminishes the gravity of the harms that AI is perpetuating and will continue to perpetuate.

Furthermore, excessive fixation on AI’s stand-alone technological risks detracts from meaningful discussion of the true nature of the AI infrastructure and the crucial matter of who holds the power to shape its development and use. The owners and developers of generative AI models are, of course, not committing evil in ways analogous to Eichmann, who organized the implementation of inhumane orders. AI systems are not analogous to gas chambers. We do not wish to trivialize the harms to humanity that Nazism caused.

Nevertheless, AI is imprisoning minds and closing (not opening) many pathways for work, meaning, expression, and human connectivity. The crisis described in U.S. Surgeon General Vivek H. Murthy’s advisory “Our Epidemic of Loneliness and Isolation,” which he identified as precipitated by social media and misinformation, is likely to be exacerbated by hyper-personalized generative AI applications.

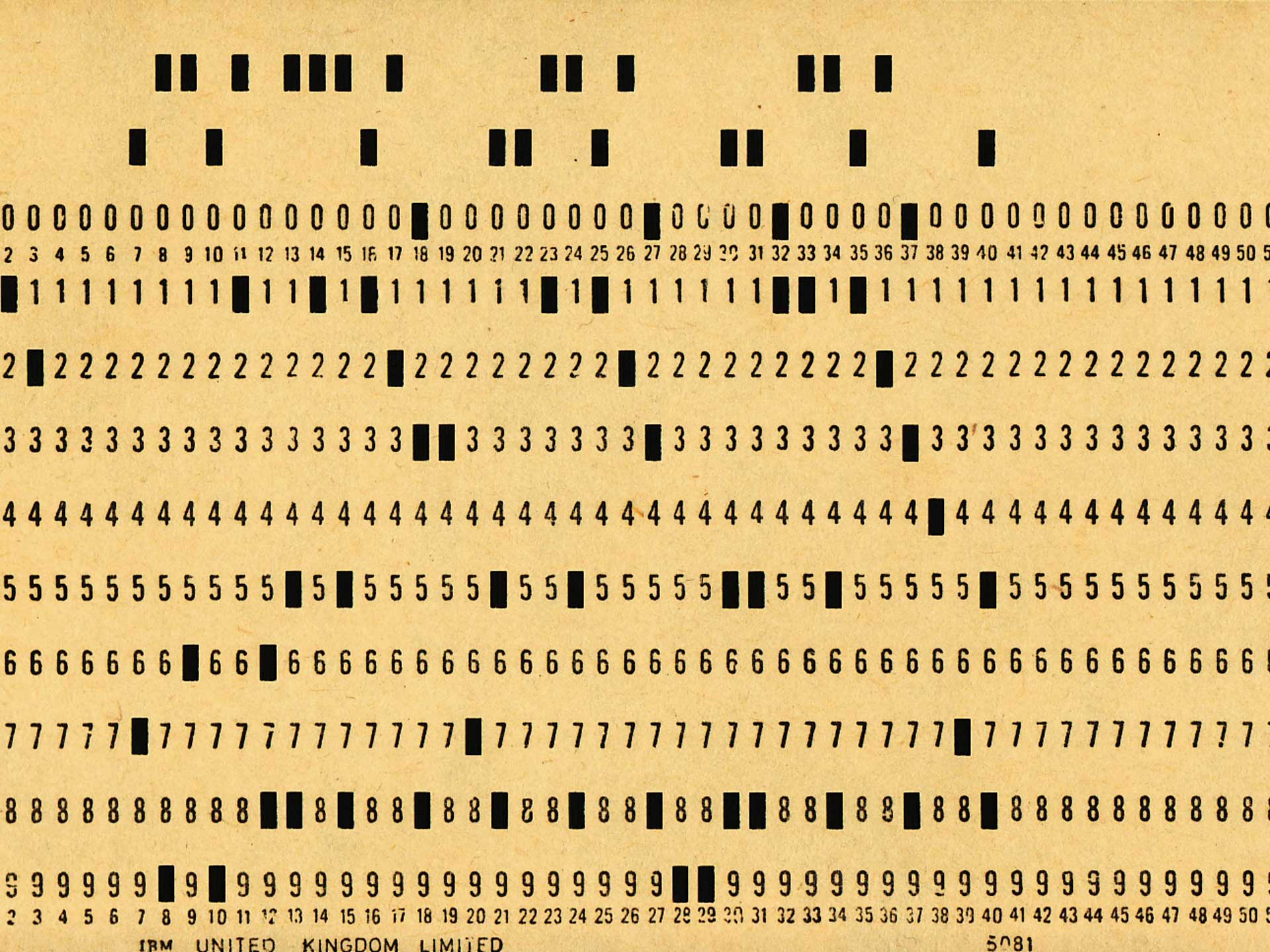

Like others who have gone before, we are concerned about the reduction of humans to ones and zeros dynamically embedded in silicon chips, and about where this type of thinking leads. Karel Čapek, the author who introduced the word “robot” in his play R.U.R., repeatedly questioned the reduction of humans to numbers and saw a direct link from automation to fascism and communism, highlighting the need for individualism and creativity as an antidote to an overly automated world. Yevgeny Ivanovich Zamyatin, author of We, satirized capitalist innovations that made people “machinelike.” He explained in a 1932 interview that his novel We is a “warning against the two-fold danger which threatens humanity: the hypertrophic power of the machines and the hypertrophic power of the State.” Conflicts fought with tanks, “aeroplanes,” and poison gas, Zamyatin wrote, reduced man to “a number, a cipher.”

AI takes automation a step beyond production and, with generative AI, into communication itself. The last century’s warnings about automation from Čapek, Zamyatin, and Arendt remain prescient. As a line often attributed to Marshall McLuhan puts it, “We shape our tools, and thereafter, our tools shape us.” Automated language that can deceive with untruths will shape us and have long-term effects on democracy and security that we have not yet fully grasped.

The rapid deployment of AI-based tools has strong parallels with that of leaded gasoline. Lead in gasoline solved a genuine problem: engine knocking. Thomas Midgley, the inventor of leaded gasoline, was aware of lead poisoning because he suffered from the disease. There were other, less harmful ways to solve the problem, but they were developed only when legislators eventually stepped in to create the right incentives to counteract the enormous profits earned from selling leaded gasoline. Similar public health catastrophes driven by greed and failures in science include the marketing of highly addictive prescription opiates, the weaponization of herbicides in warfare, and crystallized cottonseed oil, which contributed to millions of deaths from heart disease.

In each of these instances, the benefits of the technology were elevated to the point that adoption gained market momentum while criticisms and counterarguments were either difficult to raise or gained no traction. The harms these technologies caused are now widely acknowledged. The potential harms and undesired societal consequences of AI, however, are more likely to resemble the use of atomic bombs or the banning of DDT, cases in which the balance of harms and benefits remains contested. Debate continues over whether speeding up the end of a gruesome war justified the bombing of civilians, and whether the environmental benefits of eliminating the leading synthetic insecticide justified the dramatic increase in malaria deaths that critics attribute to its ban.

A secondary aspect of AI that enables the banality of evil is the outsourcing of information and data management to an unreliable system. This provides plausible deniability, much as businesses use consulting firms to justify otherwise unethical behavior. In the case of generative AI models, the prerequisites for totalitarianism may be more easily fulfilled if the models are rolled out without proper safeguards in place from the outset.

Arendt also discussed, less famously, the concept of “radical evil.” Drawing on the philosophy of Immanuel Kant, she associated radical evil with treating human beings, or certain kinds of human beings, as superfluous. Eichmann’s banality lay in committing mindless evil in the daily course of fulfilling what he saw as his bureaucratic responsibility, while the Nazi regime’s radical evil lay in treating Jews, Poles, and Roma as lacking any value at all.

Making human effort redundant is the goal of much of the AI being developed. AI does not have to be paid a salary or given sick leave, nor do its rights have to be taken into consideration. It is this idealization of removing human needs, of making humans superfluous, that we need to fundamentally question and challenge.

The argument that automating boring work would free people to pursue more worthwhile endeavors may have held currency when machines replaced repetitive manual labor, but generative AI is replacing meaningful and creative work and appropriating the creative endeavors of artists and scholars. This often exacerbates economic inequality, benefiting the wealthiest among us without providing alternative means to meet the needs of most of humanity. Using AI to eliminate jobs is arguably evil unless it is accompanied by a solution to the resulting distribution crisis, one in which people receive, in lieu of wages, the resources necessary to sustain a meaningful life and a decent standard of living.

Naomi Klein captured this concern in her latest Guardian piece about “warped hallucinations” (no, not those of the models, but rather those of their inventors):

“There is a world in which generative AI, as a powerful predictive research tool and a performer of tedious tasks, could indeed be marshalled to benefit humanity, other species and our shared home. But for that to happen, these technologies would need to be deployed inside a vastly different economic and social order than our own, one that had as its purpose the meeting of human needs and the protection of the planetary systems that support all life.”

Because it facilitates the concentration of wealth in the hands of a few, AI is certainly not a neutral technology. Despite heroic efforts to negotiate and adopt acts, treaties, and guidelines, it has become abundantly clear in recent months that our economic and social order is neither ready nor willing to muster the seriousness of intent needed to put critical measures in place.

In the rush to roll out generative AI models and technologies without sufficient guardrails or regulations, individuals are no longer seen as human beings but as data points, feeding a broader machine of efficiency that seeks to reduce costs and eliminate any need for human contributions. In this way, AI threatens to enable both the banality and the radicality of evil, and potentially fuels totalitarianism. Any tool created to replace human capability and human thinking, the bedrock on which every civilization is founded, should be met with skepticism, and tools that enable totalitarianism should be prohibited regardless of potential profits, just as other harmful scientific advances have been.

All of this is being pursued by good people, with good intentions, who are just fulfilling the tasks and goals they have taken on. Therein lies the banality that is slowly being transformed into radical evil.

Industry leaders speak of risks that could threaten our very existence, yet seem to make no effort to consider that we may already have reached a breaking point. Is acknowledging such risks enough? Have we reached the breaking point that Arendt so aptly observed, where the good becomes part of what later manifests as radical evil?

In numerous articles, we have lamented talk of futuristic existential risks as a distraction from attending to near-term challenges. But perhaps fear of artificial general intelligence is a metaphor for the evil purposes for which AI is being, and will be, deployed.

As we have also pointed out over the past year, it is essential to pay attention to what goes unspoken amid curated narratives, social silences, and obfuscations. One form of obfuscation is “moral outsourcing.” In a 2018 TEDx talk that also invoked Arendt’s banality of evil, Rumman Chowdhury defined “moral outsourcing” as “The anthropomorphizing of AI to shift the blame of negative consequences from humans to the algorithm.” She notes, “[Y]ou would never say ‘my racist toaster’ or ‘my sexist laptop’ and yet we use these modifiers in our language about artificial intelligence. In doing so we’re not taking responsibility for actions of the products that we build.”

Meredith Whittaker, president of Signal, recently argued in an interview with Meet the Press Reports that current AI systems are being “shaped to serve” the economic interests and power of a “handful of companies in the world that have that combination of data and infrastructural power capabilities of creating what we are calling AI from nose to tip.” She added that to believe “this is going to magically become a source of social good . . . is a fantasy used to market these programs.”

Whittaker’s statements stand in stark contrast to those of Eric Schmidt, former Google CEO, former executive chairman of Alphabet, and former chair of the U.S. National Security Commission on AI. Schmidt posits that as these technologies become more broadly available, companies developing AI, rather than policymakers, should be the ones to establish industry guardrails “to avoid a race to the bottom,” “because there is no way a non-industry person can understand what is possible. There is no one in the government who can get it right. But the industry can roughly get it right and then the government can put a regulatory structure around it.” Schmidt’s lack of humility should make anyone who worries about the dangers of unchecked, concentrated power cringe.

Allowing those who stand to gain the most from AI to play a leading role in setting AI governance policy is equivalent to letting the fox guard the henhouse. The AI oligopoly certainly must play a role in developing safeguards but should not dictate which safeguards are needed.

Humility on the part of leaders across government and industry is key to grappling with the many ethical tension points and mitigating harms. The truth is, no one fully understands what is possible or what can and cannot be controlled. We currently lack the tools to test the capabilities of generative AI models, and we do not know how quickly those tools might become substantially more sophisticated, or whether the continued deployment of ever-more-advanced AI will rapidly outpace any prospect of understanding and controlling those systems.

The tech industry has utterly failed to regulate itself in a way that is demonstrably safe and beneficial, and decision-makers have been late to step up with workable and timely enforcement measures. There have been many discussions lately about what mechanisms and what level of transparency are needed to prevent harm at scale. Which agencies can provide necessary and independent scientific oversight? Will existing governance frameworks suffice, and if not, why not? How can we expedite the creation of new governance mechanisms deemed necessary while navigating the inevitable geopolitical skirmishes and national security imperatives that could derail effective enforcement? What exactly should such governance mechanisms entail? Might technical organizations play a role through sandboxes and confidence-building measures?

Surely, no corporation, investor, or AI developer would like to become an enabler of “radical evil.” Yet that is exactly what is happening, through obfuscation, clandestine business models, disingenuous calls for regulation by those who know they have already achieved regulatory capture, and old-fashioned covert advertising tactics. Applications launched into the market with insufficient guardrails and maturity are not trustworthy. Generative AI applications should not be empowered until they include substantial guardrails that can be independently reviewed and that restrain industry and governments alike from effectuating radical evil.

Whether robust technological guardrails and policy safeguards can or will be forged in time to protect against undesirable or even nefarious uses remains unclear. What is clear, however, is that humanity’s dignity and the future of our planet should not be in service of the powers that be or the tools we adopt. Unchecked technological ambitions place humanity on a perilous trajectory.

This article was first published by the Carnegie Council for Ethics in International Affairs.